Regular expressions are a fantastic tool when you’re looking for patterns in time series. I wish I’d realised that sooner.

Here’s a timely example: traditionally, when you have two successive quarters of negative GDP growth, you’re in recession. We have a quarterly GDP time series for Australia, and we want to know how many recessions they’ve been having down under. How do we count these events?

Of course you can always write a loop, but it’s clumsy. You can use the “embed” function, that’s much better in a lot of cases. Or you could use regexps. Here’s how.

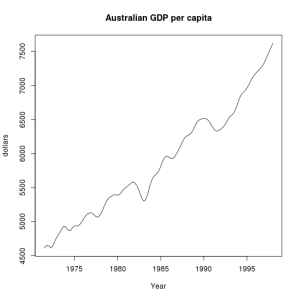

First, our Australian GDP data, courtesy of the expsmooth package:

plot(ausgdp, main = "Australian GDP per capita", ylab = "dollars", xlab = "Year")

(caveat: the series is per capita GDP, not absolute GDP, so my recession count might be off).

Regexps find patterns in character strings. They run the Web, pretty much, so googling “regexp tutorial” should turn up about a million results. Patterns are specified using a mini-language, for example “1.1” will match all strings of length three of the form “1 followed by anything followed by 1”. In R you can use gregexpr to find matches:

txt = "54131x1blahblah"

gregexpr("1.1", txt)

## [[1]] ## [1] 3 ## attr(,"match.length") ## [1] 3 ## attr(,"useBytes") ## [1] TRUE

The great advantage of regexps over other techniques is that they can match patterns of variable length. In the case of recessions that’s exactly what we want.

We turn our GDP time series into a sequence of 1s and 0s, where 1 means GDP increased in that quarter:

delta = (sign(diff(ausgdp)) == 1) + 0 head(delta)

## [1] 1 0 0 1 1 1

regexps work on character strings, so we paste everything together:

ds = do.call(paste0, as.list(c(delta))) ds

## [1] "1001111110001101111111000111111110111111100000111111111110011111111111111100000011111111111111111111111111"

(does anyone know a more elegant way to do this conversion?)

The pattern we’re looking for is two consecutive zeros or more: the appropriate regexp is “00+”, which matches patterns that have at least two zeros.

By default the regular expression engine will find the maximal match. What this means is that in the string “1100011”, it will consider that there is only one match: the substring “000”, and not two successive “00” matches. That’s not always the behaviour we want, but in the case of recessions it’s exactly what we need.

gregexpr will return all the matches, along with the lengths of the matched segments (that’s how long recessions lasted):

matches = gregexpr("00+", ds, perl = T)[[1]]

matches

## [1] 2 10 23 42 58 75 ## attr(,"match.length") ## [1] 2 3 3 5 2 6 ## attr(,"useBytes") ## [1] TRUE

We can use these matches to highlight recessions. The following code outputs a list of indices in the ausgdp time series that correspond to a recession:

m.length = attr(matches,"match.length") recessions = sapply(1:length(matches),function(ind) matches[ind]+0:(m.length[ind]))

and we can now highlight the corresponding regions in the plot:

hl = function(inds) lines(time(ausgdp)[inds], ausgdp[inds], col = "red", lwd = 3) plot(ausgdp, main = "Australian GDP per capita", ylab = "dollars", xlab = "Year") tmp = sapply(recessions, hl) #Used for side-effects only

That’s it. Things get a bit trickier when you have patterns that might be overlapping, for example when you’re interested in extracting all patterns of the form “1.1”. See that question on stack overflow for a solution involving look-ahead.

[Note: updated following comment by DataMeister. Fixed variable name]

Very neat idea, regex would never have occurred to me.

On your int list to string conversion, you should the collapse parameter of paste:

> set.seed(10)

> xs xs

[1] 1 0 0 1 0 0 0 0 1 0

> paste(xs, collapse=””)

[1] “1001000010”

>

Nice, didn’t know that!

ds <- paste(delta, collapse = "")

Hi Simon,

Have you considered rle, the run length encoding function? It’s a bit obscure but in base R. You have to coerce your time series to numeric though.

runs 1. You’ll have to

cumsum the lengths to recover the positions in the original series. I don’t know

if it’s worth it or as neat as your solution but it’s what I would have thought of

trying first.

Hi Ken! Agreed, rle works fine in this case. Regexps are a lot more flexible but sometimes they’re overkill. Also they make your code hard to decipher if you’re not used to them.

They tend to be extremely fast, though, so I wouldn’t be surprised if they worked a lot better than pure R code on long series.

“Some people, when confronted with a problem, think ‘I know, I’ll use regular expressions.’ Now they have two problems.” – Jamie Zawinski

I’m relatively new to R. When I applied this code to weekly gold prices, I came up with this error.

Error in lapply(X = X, FUN = FUN, …) : object ‘m.ind’ not found

This is the code…

goldbear = sapply(1:length(m.ind), function(ind) m.ind[ind] + 0:(m.length[ind]))

You’re right, my bad, some code was missing in between two code blocks. Should work now.

Many Thanks. Works like a charm

Reblogged this on The Quant Guy and commented:

A nice way of detecting Recessions in R…

Interesting post.

Also you can as.numeric delta instead of adding 0

delta = as.numeric(sign(diff(ausgdp)) == 1)

Adding 0 should be fine if it’s integer but you may get into some floating point problems in other cases – but you should not when you’re pasting.